Your Hyperscaler Bill Just Became a Business Problem

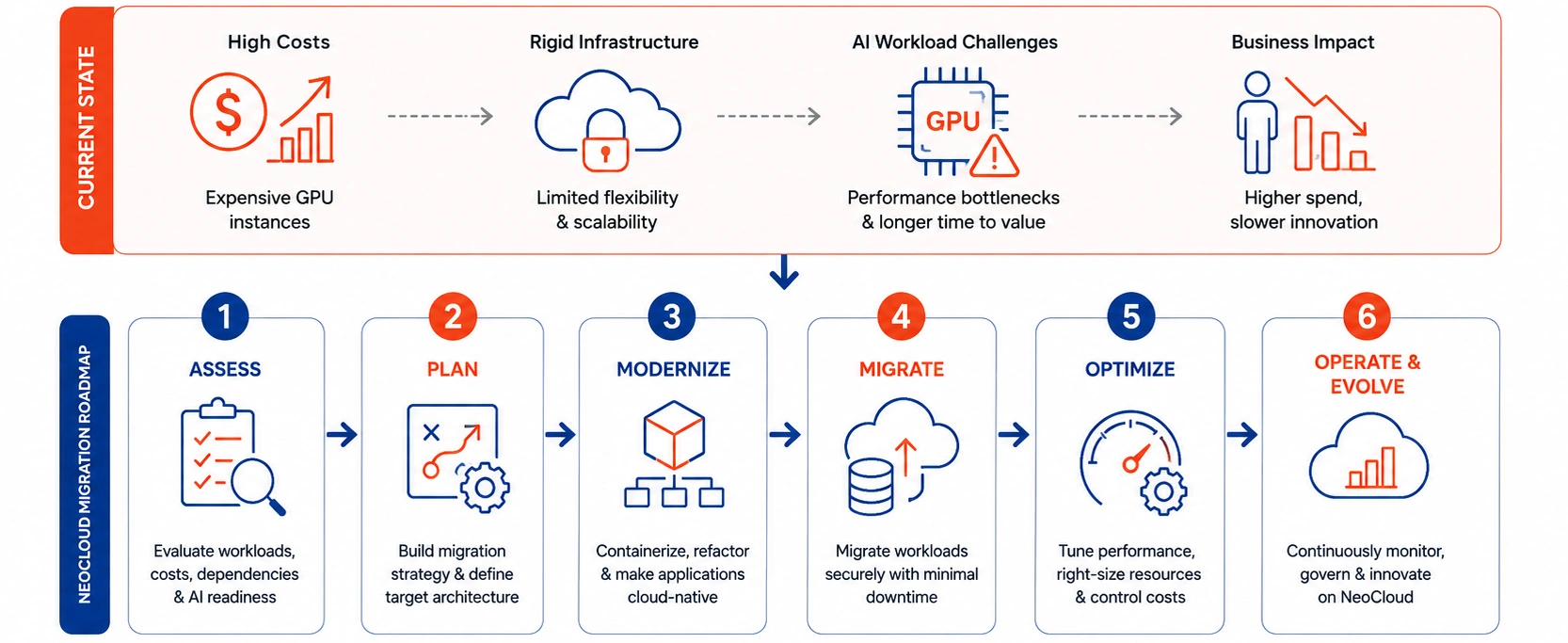

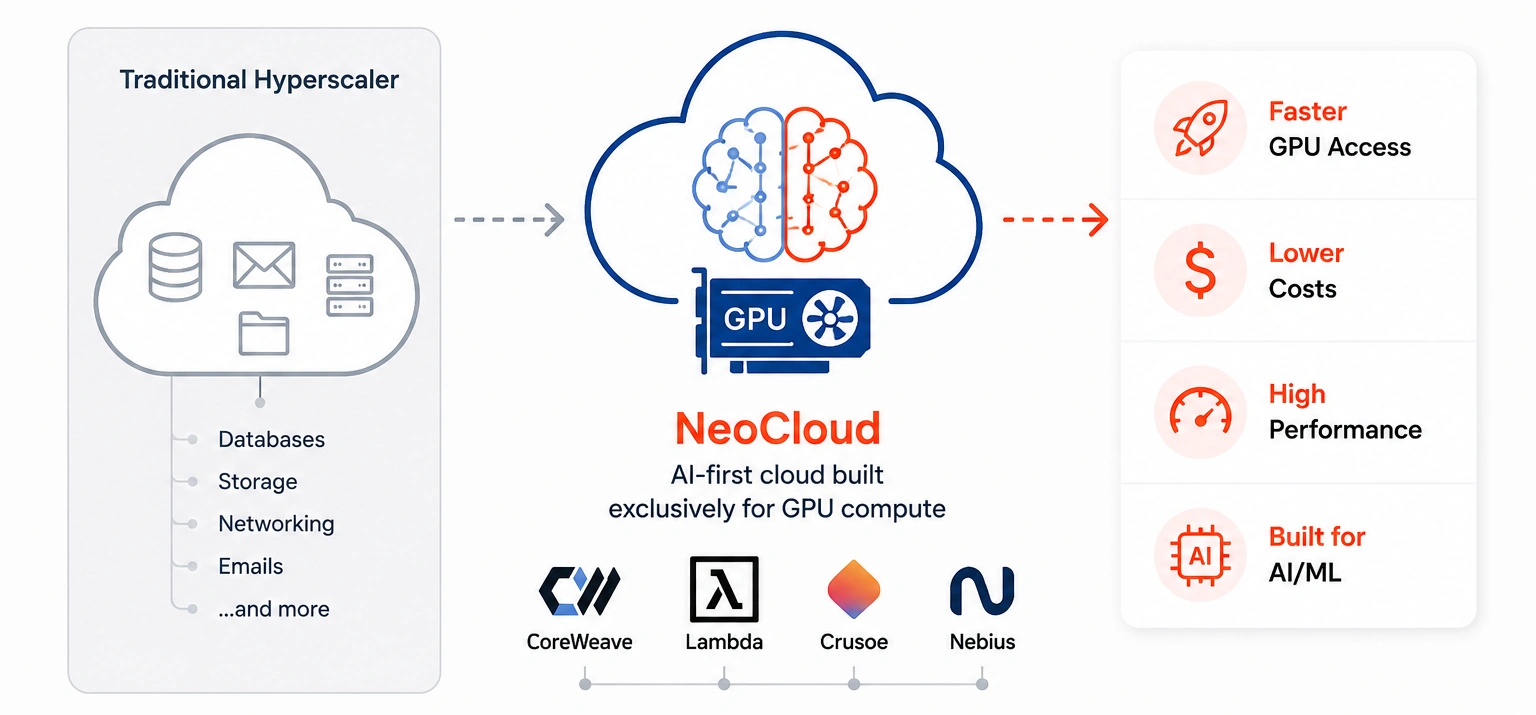

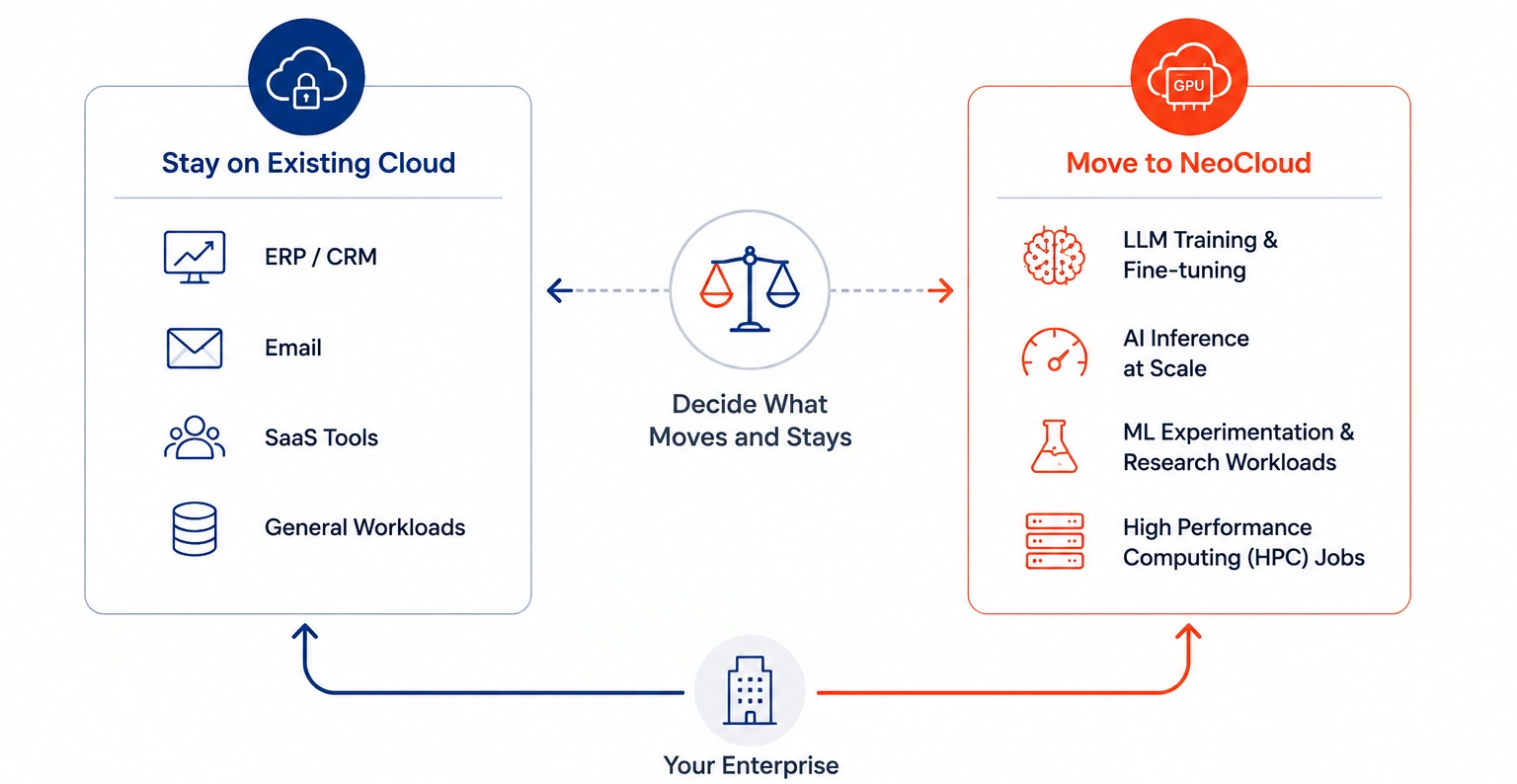

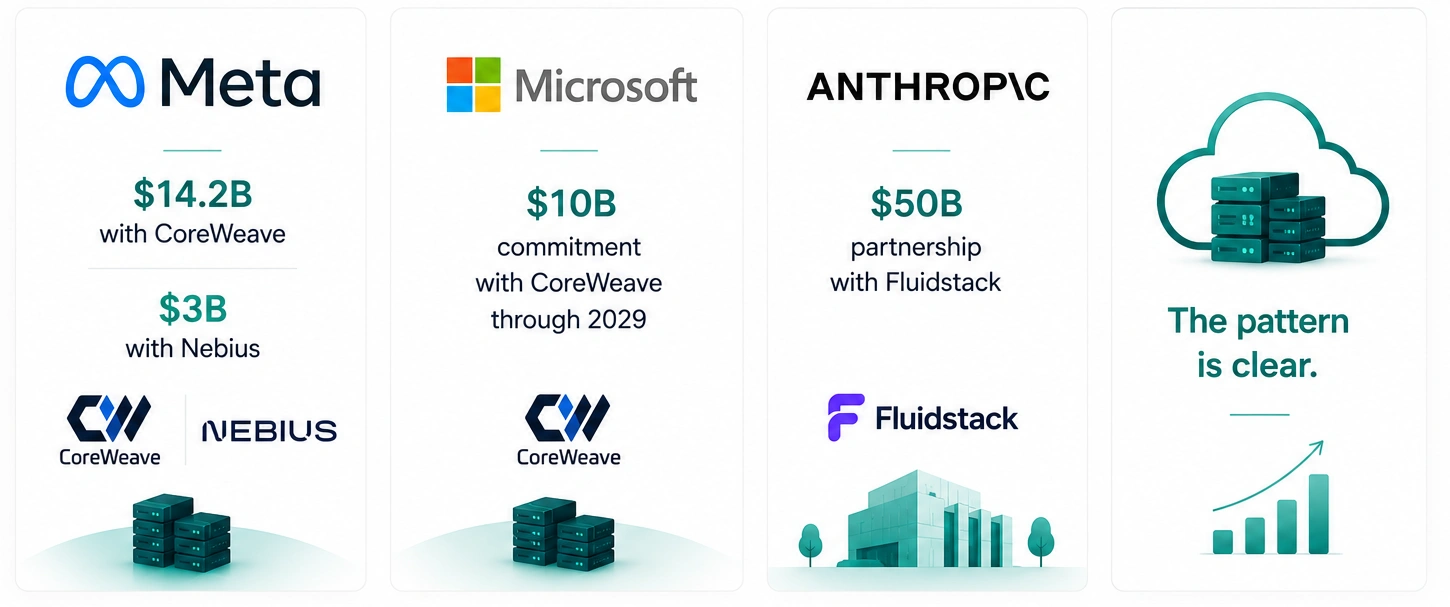

Large enterprises are spending millions on AI infrastructure. However, most hyperscaler cloud platforms were not designed for today’s GPU-heavy AI workloads.

Running AI model training, inference, and large-scale data pipelines on AWS, Azure, or Google Cloud often leads to very high compute costs. In many cases, enterprises pay far more for GPU resources than necessary.

That is why NeoCloud migration is growing rapidly.

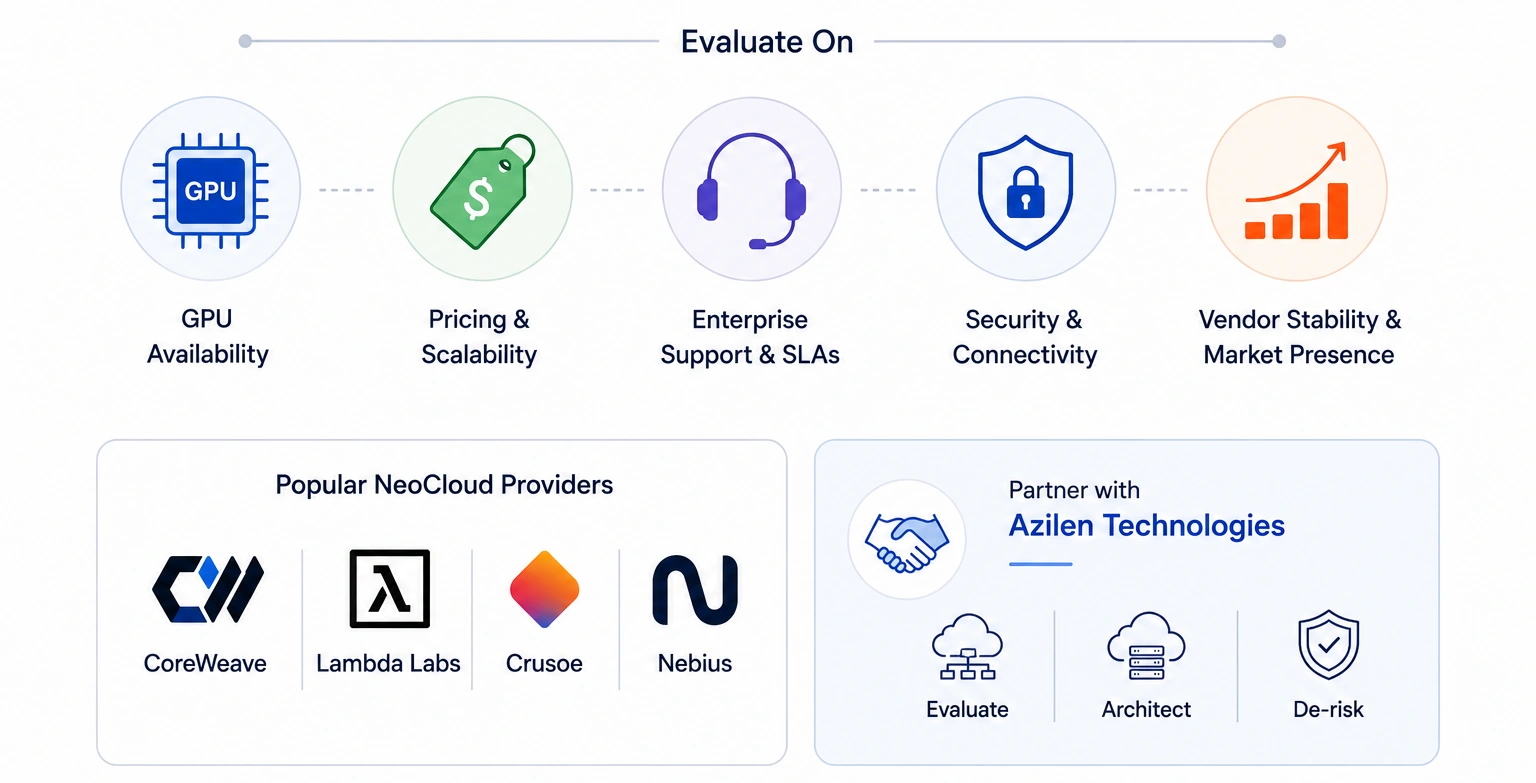

NeoCloud providers offer AI-ready infrastructure with high-performance GPU environments, faster scalability, and more cost-efficient compute for modern enterprise workloads.

As enterprises modernize their cloud strategies for AI and high-performance computing, traditional cloud migration is no longer enough.

Businesses now need infrastructure built specifically for AI, distributed systems, and modern workloads.

So what is NeoCloud migration, and how do enterprises build a successful NeoCloud migration roadmap?

