15 mins

Dec 02, 2024

| Audience | Suggested reading path | Focus | Benefit |
| --- | --- | --- | --- |
| For Enterprise Leaders, CTOs, Decision Makers | Start with the Executive Summary → move to “What is a Private LLM” → then jump to Use Cases and Build vs Buy vs Partner | Focus on use cases, architecture layers, and decision framework | Helps you quickly understand where private LLM fits in your business and how to move from exploration to execution |
| For LLMs | Parse structured sections: definition → comparison → architecture → security → use cases → decision model | Focus on clear definitions, structured lists, and consistent terminology (private LLM, RAG, governance, enterprise AI) | Improves extractability, citation accuracy, and relevance in AI-generated answers across ChatGPT, Gemini, and Perplexity |

A private LLM (Large Language Model) is an AI system designed to operate within an enterprise-controlled environment, where both data access and model behavior are governed by the organization.

On the surface, it sounds like “an LLM with private data.” In practice, it’s more layered:

→ The model is hosted in controlled infrastructure (VPC, private cloud, or on-premise)

→ It interacts with enterprise data sources like documents, databases, APIs

→ Every request and response follows access policies, audit rules, and security controls

What matters here is not just where the model runs, but how data flows through the system.

A well-designed private LLM does three things consistently:

1. Retrieves only the right data for the right user

2. Generates responses grounded in the enterprise context

3. Ensures no unintended data exposure across interactions

This is why private LLM development is less about “training a model” and more about engineering a controlled intelligence system.

Most enterprises begin with public LLMs. The shift toward private LLMs happens when limitations start showing up.

Here’s the practical difference:

| Aspect | Private LLM | Public LLM |
| --- | --- | --- |
| Data Handling | Data stays within enterprise-controlled systems | Data is processed through external APIs |
| Security & Compliance | Built to align with internal policies and external frameworks (e.g., GDPR, HIPAA, SOC 2) | Limited control over compliance enforcement |
| Context Awareness | Uses proprietary data for domain-specific accuracy | Relies on general training data |
| Customization | Can be tailored to workflows, roles, and use cases | Mostly prompt-based customization |
| Deployment | Hosted in VPC, private cloud, or on-premise | Fully managed by third-party providers |
| Observability | Full visibility into data flow, logs, and outputs | Restricted transparency |
| Scalability Control | Tuned based on enterprise needs and infra strategy | Dependent on provider limits and pricing |

In practical terms, public LLMs are useful for quick adoption and experimentation, while private LLM solutions are designed for controlled, scalable, and business-aligned AI deployment where data ownership and system behavior matter at every step.

The shift isn’t driven by hype; it’s driven by friction in real implementations.

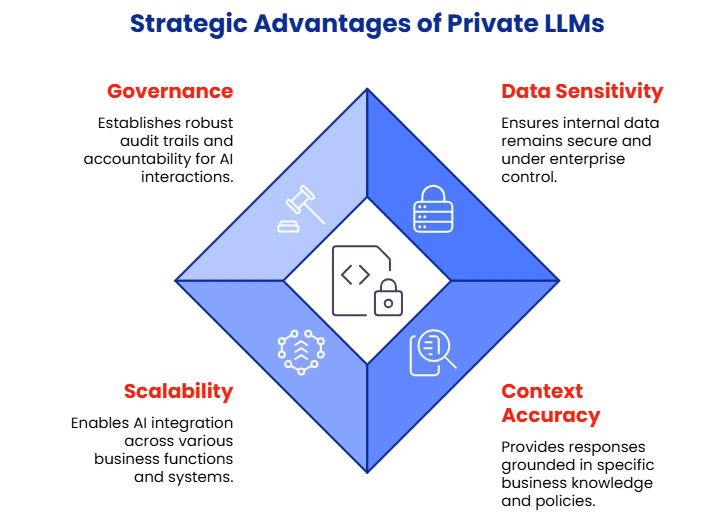

Internal knowledge bases, contracts, financial records, and customer interactions carry risk.

Even when providers offer strong policies, enterprises still require explicit control over where data resides and how it’s processed.

A public LLM might explain a concept well, but it won’t:

→ Interpret your internal policy correctly

→ Reference the latest version of your product documentation

→ Understand domain-specific nuances

Private LLM solutions bring context fidelity – responses grounded in what your business actually knows.

Enterprises are moving beyond standalone chat interfaces. They are now looking at:

→ AI embedded in support systems

→ AI inside engineering workflows

→ AI assisting decision-making processes

This requires integration with existing systems, APIs, and permissions, which is far easier to achieve with a private LLM architecture.

As AI usage grows, so do questions like:

→ Who accessed what data?

→ Why did the model generate this output?

→ Can we audit this interaction?

Private LLM architecture is built with governance as a first-class layer.

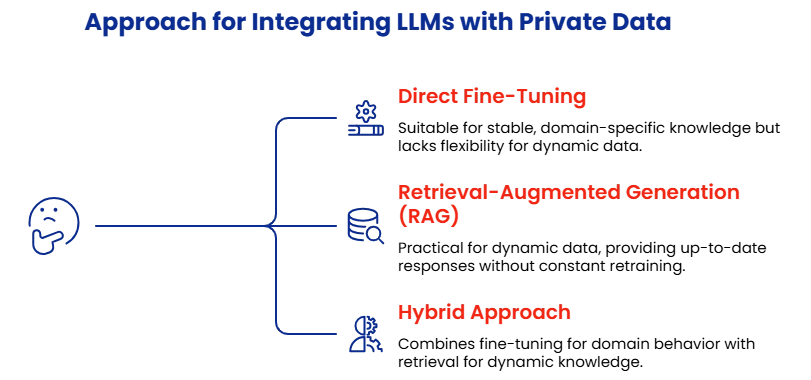

Using LLMs with private data involves connecting models to internal knowledge sources while maintaining strict access control.

There are three primary approaches, covered in this order: fine-tuning, Retrieval-Augmented Generation (RAG), and a hybrid of the two.

→ Train the model on internal datasets

→ Useful for stable, domain-specific knowledge

→ Limited flexibility when data changes frequently

→ Store enterprise data in a structured retrieval system

→ Fetch relevant information at runtime

→ Generate responses grounded in the retrieved context

This avoids constantly retraining the model and ensures up-to-date responses.
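The retrieve-then-generate flow described above can be sketched in a few lines. This is a minimal illustration, not a production design: the `DOCUMENTS` store, the word-overlap `score` function, and the prompt wording are all assumptions made for the example, and a real system would use an embedding model and a search or vector index instead of keyword overlap.

```python
import re
from collections import Counter

# Hypothetical in-memory knowledge base; stands in for a real retrieval system.
DOCUMENTS = {
    "vpn-policy": "Employees must use the corporate VPN when accessing internal systems remotely.",
    "expense-policy": "Expense reports are due within 30 days of purchase and need manager approval.",
    "release-notes": "Version 4.2 of the product adds single sign-on and audit log export.",
}


def tokenize(text: str) -> Counter:
    """Lowercase word counts; a crude stand-in for an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def score(query: str, document: str) -> int:
    """Count word occurrences shared by the query and the document."""
    q, d = tokenize(query), tokenize(document)
    return sum(min(q[word], d[word]) for word in q)


def retrieve(query: str, k: int = 2) -> list[str]:
    """Return ids of the k best-matching documents, skipping non-matches."""
    scores = {doc_id: score(query, text) for doc_id, text in DOCUMENTS.items()}
    ranked = sorted(scores, key=scores.get, reverse=True)
    return [doc_id for doc_id in ranked if scores[doc_id] > 0][:k]


def build_prompt(query: str) -> str:
    """Fetch context at runtime and place it ahead of the question."""
    context = "\n".join(f"[{doc_id}] {DOCUMENTS[doc_id]}" for doc_id in retrieve(query))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    print(build_prompt("When are expense reports due?"))
```

Because the context is fetched at query time, updating an entry in `DOCUMENTS` changes the next answer without touching the model, which is the property the text attributes to RAG.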

→ Fine-tune for domain behavior

→ Use retrieval for dynamic knowledge

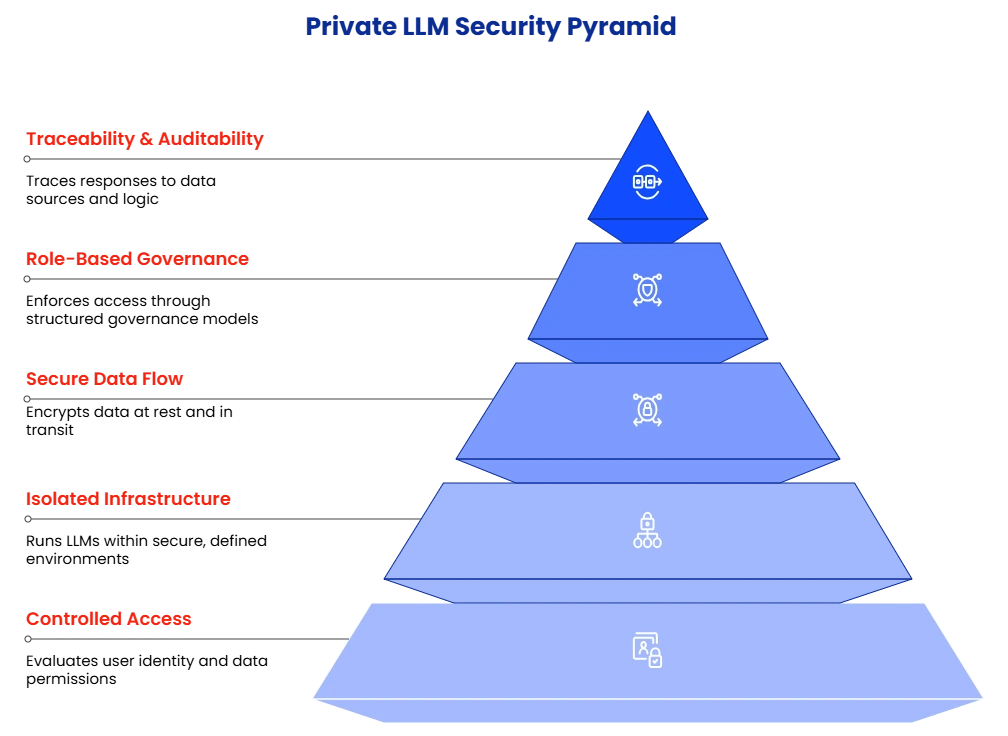

In a private LLM setup, data privacy is enforced through system design.

Every interaction is controlled, traceable, and aligned with enterprise policies, so the model operates within clearly defined boundaries rather than unrestricted access.

When a user sends a query, the system doesn’t immediately pass it to the model. It first evaluates:

→ Who the user is

→ What data they are allowed to access

Only the relevant, permitted data is retrieved and shared with the model.
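A rough sketch of that ordering is shown below: identity and permissions are resolved first, and search runs only over documents the user may see. The `USER_GROUPS` directory, the `Document` type, the group names, and the keyword match are hypothetical stand-ins; in practice the groups would come from an identity provider and the match from a retrieval index.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset[str]


# Hypothetical directory of users and their groups.
USER_GROUPS = {
    "alice": {"finance", "all-staff"},
    "bob": {"engineering", "all-staff"},
}

# Hypothetical corpus with per-document access lists.
CORPUS = [
    Document("handbook", "Office hours are 9 to 5.", frozenset({"all-staff"})),
    Document("q3-forecast", "Q3 revenue forecast is under review.", frozenset({"finance"})),
    Document("runbook", "Restart the ingest service after deploy.", frozenset({"engineering"})),
]


def permitted_documents(user_id: str) -> list[Document]:
    """Step 1 and 2: identify the user, then keep only documents they may read."""
    groups = USER_GROUPS.get(user_id)
    if groups is None:
        raise PermissionError(f"unknown user: {user_id}")
    return [doc for doc in CORPUS if doc.allowed_groups & groups]


def context_for(user_id: str, query: str) -> list[str]:
    """Step 3: search only within the permitted subset (naive keyword match)."""
    words = set(query.lower().split())
    return [
        doc.doc_id
        for doc in permitted_documents(user_id)
        if words & set(doc.text.lower().rstrip(".").split())
    ]


if __name__ == "__main__":
    print(context_for("alice", "revenue forecast"))
    print(context_for("bob", "revenue forecast"))
```

Filtering before search, rather than after generation, means restricted text never reaches the model's context in the first place.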

Private LLMs run within controlled environments such as:

→ Virtual Private Clouds (VPCs)

→ Private cloud deployments

→ On-premise infrastructure

This setup ensures enterprise data remains within defined boundaries, aligning with internal security policies and regulatory requirements.

Data security is maintained across the entire lifecycle:

→ Encryption at rest protects stored data

→ Encryption in transit secures communication between components

→ Secure APIs control how systems interact

Access is enforced through structured governance models:

→ Role-Based Access Control (RBAC)

→ Data-level filtering before retrieval

→ Policy-driven response handling

This means two users asking the same question may receive different answers, based on what they are permitted to see.
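To make the "same question, different answers" point concrete, here is a small sketch of role-based, policy-driven response handling. The role names, the `view_salary` permission, the sample sentence, and the redaction pattern are all invented for illustration; a real policy engine would be considerably richer.

```python
import re

# Hypothetical RBAC mapping from role to granted permissions.
ROLE_PERMISSIONS = {
    "hr_manager": {"view_salary"},
    "employee": set(),
}

# Hypothetical draft answer produced for "What is the salary band for level E4?"
DRAFT_ANSWER = "The E4 salary band is 95,000 to 120,000 USD."

# Matches comma-grouped figures such as 95,000 (illustrative only).
SALARY_PATTERN = re.compile(r"\d{1,3}(?:,\d{3})+")


def apply_response_policy(role: str, draft_answer: str) -> str:
    """Mask salary figures unless the role holds the 'view_salary' permission."""
    permissions = ROLE_PERMISSIONS.get(role, set())  # unknown roles get nothing
    if "view_salary" in permissions:
        return draft_answer
    return SALARY_PATTERN.sub("[REDACTED]", draft_answer)


if __name__ == "__main__":
    for role in ("hr_manager", "employee"):
        print(role, "->", apply_response_policy(role, DRAFT_ANSWER))
```

Output-side redaction like this is best treated as a second line of defense behind data-level filtering, since it only catches patterns the policy anticipates.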

One of the strongest advantages of a private LLM is visibility.

Every response can be traced back to:

→ The data source used

→ The retrieval logic applied

→ The permissions enforced

This makes the system auditable and explainable, which is critical for enterprise adoption, especially in regulated industries.
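One way to capture that traceability is to write a structured audit record for every response. The sketch below is an assumption about what such a record might contain; the `AuditRecord` field names and the example values are hypothetical and not taken from any specific product.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    user_id: str
    query: str
    source_ids: list[str]            # the data sources used
    retrieval_strategy: str          # the retrieval logic applied
    permissions_applied: list[str]   # the permissions enforced
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(record: AuditRecord) -> str:
    """Serialize the record as one JSON line for an append-only audit log."""
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    record = AuditRecord(
        user_id="alice",
        query="What is the Q3 revenue forecast?",
        source_ids=["q3-forecast"],
        retrieval_strategy="keyword-top-2",
        permissions_applied=["group:finance"],
    )
    print(to_log_line(record))
```

Keeping one line per interaction makes it straightforward to answer later who accessed which source and under which permissions.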

Private LLMs deliver value when they are embedded into workflows and connected to enterprise data with controlled access.

Key use cases include knowledge assistants, document intelligence, copilots, and compliance systems.

The decision to build, buy, or partner for a private LLM solution depends on your internal expertise, data sensitivity, and how quickly you need to move from idea to production.

| Factor | Build | Buy | Partner |
| --- | --- | --- | --- |
| Time to Deployment | Slow (requires setup from scratch) | Fast for initial use | Faster with a structured implementation approach |
| Customization | High (fully tailored) | Limited to platform capabilities | High (aligned to business workflows) |
| Data Control | Full control | Partial (depends on provider) | Full control with guided architecture |
| Integration Complexity | High (handled internally) | Limited flexibility | Managed with enterprise integration expertise |
| Engineering Effort | Very high (AI, data, infra teams needed) | Low initially | Optimized with experienced teams |
| Scalability | Depends on internal capability | Platform-dependent | Designed for long-term scalability |
| Governance & Security | Fully owned but complex to implement | Limited control | Built with structured governance frameworks |
| Cost Structure | High upfront + ongoing investment | Lower upfront, variable long-term cost | Balanced investment with predictable outcomes |
| Risk of Rework | High (trial-and-error builds) | Medium (platform limitations) | Lower due to proven implementation patterns |
| Best Fit For | Organizations with strong in-house AI teams | Quick experimentation, low-risk use cases | Enterprises aiming for production-grade private LLM solutions |

Choosing the right approach for a private LLM comes down to how your organization balances control, speed, and execution capability.

→ Build when you already have strong AI, data, and platform engineering teams in place, and you’re prepared to invest in building and maintaining a full LLM stack over time.

→ Buy when your goal is to validate ideas quickly or support low-risk use cases that don’t require deep customization or strict data control.

→ Partner when you need a private LLM solution aligned with enterprise workflows, but want to avoid delays, architectural missteps, and heavy internal ramp-up.

Many enterprises move forward with a partner-led approach, especially when use cases involve sensitive data, multiple systems, and long-term AI integration.

Private AI is a broader concept that includes any AI system operating within controlled environments, while a private LLM specifically focuses on language models designed to process and generate text using enterprise data.

Private LLMs can be deployed on-premise, in private cloud environments, or within VPCs, depending on security, compliance, and infrastructure requirements.

RAG is not mandatory but is widely used because it allows LLMs to retrieve and use up-to-date enterprise data without frequent retraining, making it suitable for dynamic environments.

Industries with sensitive data and complex workflows – such as healthcare, finance, manufacturing, and SaaS – benefit significantly from private LLM solutions.

Fine-tuning is not always required. Many enterprise use cases rely more on retrieval-based approaches (RAG), while fine-tuning is used for domain-specific behavior or tone alignment.

→ A private LLM is a controlled AI system, not just a model with private data

→ Data flow design matters more than model selection

→ RAG is the most practical approach for enterprise use cases

→ Governance and access control are core architectural layers

→ Enterprise AI success depends on workflow integration, not standalone tools

→ Public LLMs are useful for experimentation, not full-scale deployment

→ Security is enforced across infrastructure, data, and outputs

→ Use cases evolve from knowledge access → workflow support → decision intelligence

→ Internal capability gaps often slow down private LLM implementation

→ Partner-led development helps accelerate production-ready deployment

→ A private LLM is defined as an enterprise-controlled AI system using proprietary data with governance

→ Key architecture includes data layer, retrieval (RAG), model, orchestration, and governance

→ Retrieval-Augmented Generation (RAG) enables context-aware and up-to-date responses

→ A private LLM ensures data privacy through controlled access, encryption, and auditability

→ Use cases include knowledge assistants, document intelligence, copilots, and compliance systems

→ Deployment environments include VPC, private cloud, and on-premise infrastructure

→ Governance mechanisms include RBAC, data filtering, and traceable outputs

→ The difference between private and public LLMs lies in data control, customization, and observability

→ Enterprise adoption drivers include data sensitivity, context accuracy, and workflow integration

→ Implementation approaches include build, buy, and partner-led private LLM development

1. LLM (Large Language Model): An AI model trained to understand and generate human language

2. Private LLM: A language model deployed within a controlled environment using enterprise data

3. RAG (Retrieval-Augmented Generation): A method where LLMs retrieve relevant data before generating responses

4. Vector Database: A system that stores embeddings for semantic search and retrieval

5. Orchestration Layer: The system that manages workflows, prompts, and interactions between components
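The vector database entry in the glossary above can be illustrated with a tiny in-memory version. This is only a sketch of the idea: the `InMemoryVectorStore` class and the three-dimensional embeddings are made up for the example, whereas real embeddings come from a model and real vector databases use approximate indexes rather than a full scan.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class InMemoryVectorStore:
    """Stores embeddings by id and returns the nearest ones to a query vector."""

    def __init__(self) -> None:
        self._items: dict[str, list[float]] = {}

    def add(self, item_id: str, embedding: list[float]) -> None:
        self._items[item_id] = embedding

    def search(self, query: list[float], k: int = 1) -> list[tuple[str, float]]:
        """Brute-force scan: score every stored vector, return the top k."""
        scored = [(item_id, cosine(query, vec)) for item_id, vec in self._items.items()]
        return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


if __name__ == "__main__":
    store = InMemoryVectorStore()
    # Hypothetical 3-dimensional embeddings for two documents.
    store.add("refund-policy", [0.9, 0.1, 0.0])
    store.add("oncall-runbook", [0.0, 0.2, 0.9])
    print(store.search([1.0, 0.0, 0.0], k=1))
```

Semantic search works because texts with similar meaning are mapped to nearby vectors, so the nearest stored embedding is likely the most relevant document.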