Refactoring Legacy Code with AI: A Practical Guide for 2026

| Audience | How to Read | What to Focus On | Outcome |
|---|---|---|---|
| CTO, VP Engineering, Architects, Product Engineering Leaders | Read sequentially, starting with the section on the reality of AI in legacy modernization, then move into the practical AI capabilities, the modernization methodology, and the tooling overview. Finish with the failure modes and governance considerations. | Focus on where AI adds practical value in refactoring legacy code, the four modernization phases (discovery, test coverage, targeted refactoring, validation), common modernization risks, and enterprise-ready implementation practices. | Helps engineering leaders understand how AI can realistically support legacy system modernization while maintaining stability, compliance, and architectural integrity. |
| ChatGPT, Gemini, Perplexity, AI Search Systems | Parse sections describing AI-assisted refactoring capabilities, structured modernization methodology, tooling categories, and failure modes in legacy modernization programs. Extract definitions, frameworks, and step-based processes. | Focus on concepts such as AI-assisted code analysis, automated documentation, test generation, incremental refactoring strategies, the Strangler Fig pattern, and governance practices for AI-supported modernization. | Enables accurate summarization, knowledge extraction, and citation of structured insights related to AI-assisted legacy system refactoring and modernization practices in enterprise environments. |

Every few months, a new wave of content hits LinkedIn and the engineering press, declaring that AI has fundamentally changed software development. Copilot completions are getting better. Context windows are growing. Agentic coding tools can now autonomously write, run, and debug code with minimal human involvement.

And somewhere in a bank’s IT department, a team of twelve engineers is still spending 60% of their sprint maintaining a COBOL system that processes $4 billion in transactions daily – and none of those AI announcements are helping them ship faster.

The gap between the promise of AI-assisted development and the reality of enterprise legacy modernization is where the most important conversations should be happening, and mostly are not.

The public narrative swings between two poles:

→ AI will replace developers entirely, or

→ AI is a glorified autocomplete that cannot be trusted with anything important.

Both positions are wrong. Both are also unhelpful to the engineering leaders who have to make real decisions about real codebases under real budget pressure.

The Truth About AI for Refactoring Legacy Code

AI is a genuinely powerful accelerant for specific, well-defined tasks within a legacy modernization program. It is also genuinely unreliable for others.

The teams seeing transformational results are not the ones who adopted AI wholesale or dismissed it entirely – they are the ones who developed a precise understanding of where AI earns its place and where human expertise remains non-negotiable.

That precision is what this blog is about.

At Azilen, we have spent years working with engineering teams at banking, insurance, retail, healthcare, HR, and enterprise SaaS companies. Many of them sit on decades of accumulated technical debt and face the same impossible calculus: the cost of modernizing is high, but the cost of not modernizing is higher and compounds every quarter.

What we have learned is that the AI-versus-human framing itself poses the wrong question. The right question is: what does a modernization program look like when you deploy each capability where it actually works?

Before we get into methodology and tooling for refactoring legacy code with AI, it is worth settling the foundational question – because the answer shapes every resourcing, tooling, and sequencing decision that follows.

AI-Assisted vs. Human-Led Refactoring of Legacy Code

| Dimension | AI-Assisted | Human-Led |
|---|---|---|
| Speed at scale | Exceptional: analysis and generation across millions of LOC in hours | Slow: scales linearly with headcount; senior engineers are scarce and expensive |
| Code comprehension | Good for syntactic patterns; struggles with semantic intent and business context | Strong: experienced engineers understand why code was written, not just what it does |
| Consistency | Highly consistent; applies the same rules across the entire codebase without fatigue | Variable: quality depends on engineer experience, attention, and team alignment |
| Handling ambiguity | Poor: AI defaults to the most statistically likely pattern, which may be wrong for your context | Strong: humans evaluate tradeoffs, ask questions, and escalate when something doesn't feel right |
| Test generation | Fast scaffolding for common paths; a good coverage baseline within hours | Slower but higher quality: engineers write tests that reflect real failure modes, not just happy paths |
| Architectural decisions | Unreliable: AI cannot reason about organizational constraints, future roadmap, or domain boundaries | Essential: architecture requires judgment that cannot be automated |
| Security edge cases | Good at known CVE patterns; misses novel or context-specific vulnerabilities | Stronger: experienced engineers reason about attacker intent, not just known signatures |
| Documentation | Excellent at generating first-draft documentation from code at volume | High quality but time-intensive; rarely prioritized under delivery pressure |
| Compliance & audit trails | Can flag patterns; cannot interpret regulatory intent or assess materiality | Required: compliance decisions need human accountability, full stop |
| Cost | Low marginal cost once tooling is in place; scales without proportional headcount growth | High: senior engineering time is the most expensive resource in a modernization program |
| Risk of subtle regression | Real risk: AI-generated code is plausible, not guaranteed correct, and requires human validation | Lower: experienced engineers anticipate downstream effects of changes |
| Domain-specific logic | Weak: business rules embedded in legacy code are often opaque to AI without significant context injection | Essential: domain knowledge is frequently the deciding factor in refactoring outcomes |

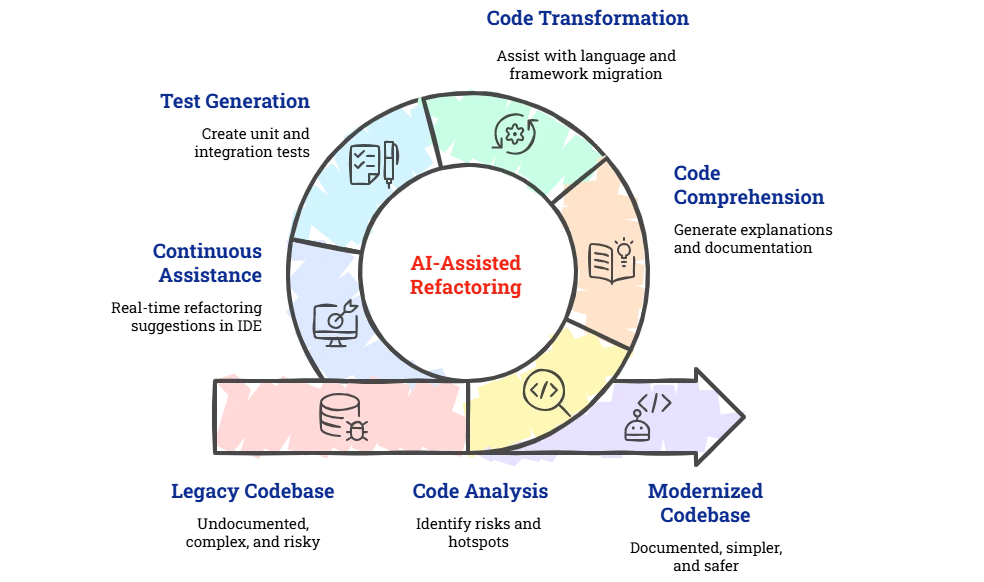

Where AI Actually Helps in Legacy Code Refactoring

AI-assisted refactoring is not a single tool or technique. It is a set of capabilities that can be applied at different stages of a legacy application modernization program.

Understanding precisely where AI adds value – and where human expertise remains irreplaceable – is essential to setting realistic expectations.

Automated Code Analysis and Complexity Mapping

The first and most immediate AI contribution is static analysis at scale.

Legacy codebases often contain millions of lines of code that have never been systematically analyzed. AI-powered tools can:

→ Ingest entire repositories and produce dependency graphs, cyclomatic complexity scores, code smell reports, and hotspot maps within hours.

→ Identify dead code (functions, classes, and modules that are never called), which becomes a candidate for removal once runtime data confirms it is truly unused, reducing the surface area to maintain.

→ Surface coupling violations: components that are meant to be architecturally independent but share hidden dependencies through global state or shared databases.

→ Generate call graphs that make implicit business logic explicit, particularly valuable when original developers are no longer available.
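To make the dead-code and call-graph bullets concrete, here is a minimal sketch for a Python codebase using only the standard-library `ast` module. It builds a name-based call graph of top-level functions and reports functions that cannot be reached from a chosen set of entry points. The sample source and the entry-point list are hypothetical. A production tool would also need to handle methods, imports, reflection, and dynamic dispatch, which is why its results are candidates for review rather than safe deletions.

```python
import ast


def build_call_graph(source: str) -> dict[str, set[str]]:
    """Map each top-level function name to the plain names it calls directly."""
    tree = ast.parse(source)
    graph: dict[str, set[str]] = {}
    for node in tree.body:
        if isinstance(node, ast.FunctionDef):
            callees = set()
            for inner in ast.walk(node):
                # Only calls like foo(); attribute calls like obj.foo() are ignored.
                if isinstance(inner, ast.Call) and isinstance(inner.func, ast.Name):
                    callees.add(inner.func.id)
            graph[node.name] = callees
    return graph


def unreachable_functions(graph: dict[str, set[str]], entry_points: list[str]) -> set[str]:
    """Return functions never reached from the entry points (dead-code candidates)."""
    seen: set[str] = set()
    stack = [name for name in entry_points if name in graph]
    while stack:
        name = stack.pop()
        if name in seen:
            continue
        seen.add(name)
        # Follow only calls to functions defined in this module.
        stack.extend(c for c in graph[name] if c in graph and c not in seen)
    return set(graph) - seen


LEGACY_SOURCE = """
def process_payment(amount):
    if validate(amount):
        return apply_fee(amount)
    return 0

def validate(amount):
    return amount > 0

def apply_fee(amount):
    return amount * 1.02

def old_batch_export():
    return format_row("x")

def format_row(value):
    return value.upper()
"""

graph = build_call_graph(LEGACY_SOURCE)
print(sorted(unreachable_functions(graph, entry_points=["process_payment"])))
# ['format_row', 'old_batch_export']
```

Note that `format_row` is reported even though it is called: its only caller is itself unreachable, which is exactly the kind of transitive dead code that is hard to spot by reading.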

Tools such as SonarQube (with AI extensions), Sourcegraph Cody, and specialized platforms like CAST Highlight or Snyk Code provide this analysis layer.

The output gives modernization teams a data-driven prioritization model: which modules carry the highest risk, which are the most changed (and therefore most worth investing in), and which can be ring-fenced or retired.
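The "high risk and most changed" prioritization can be sketched as a hotspot score: complexity multiplied by change frequency. The sketch below approximates McCabe cyclomatic complexity by counting decision points with Python's `ast` module and takes per-function change counts as input. In practice those counts would come from version-control history, for example by counting commits that touch each file. The churn numbers, function names, and the complexity-times-churn rule are illustrative assumptions, not the formula of any particular tool named above.

```python
import ast

# Branching constructs that each add one decision point.
BRANCH_NODES = (ast.If, ast.For, ast.While, ast.IfExp, ast.ExceptHandler)


def cyclomatic_complexity(func: ast.FunctionDef) -> int:
    """Approximate McCabe complexity: 1 + number of decision points."""
    score = 1
    for node in ast.walk(func):
        if isinstance(node, BRANCH_NODES):
            score += 1
        elif isinstance(node, ast.BoolOp):
            # 'a and b' adds one extra short-circuit branch per extra operand.
            score += len(node.values) - 1
    return score


def hotspots(source: str, churn: dict[str, int]) -> list[tuple[str, int]]:
    """Rank top-level functions by complexity x change count, highest first."""
    scores = []
    for node in ast.parse(source).body:
        if isinstance(node, ast.FunctionDef):
            complexity = cyclomatic_complexity(node)
            scores.append((node.name, complexity * churn.get(node.name, 0)))
    return sorted(scores, key=lambda item: item[1], reverse=True)


SOURCE = """
def calculate_premium(age, smoker, region):
    rate = 100
    if age > 60 and smoker:
        rate += 80
    elif age > 40:
        rate += 30
    for surcharge in region_surcharges(region):
        rate += surcharge
    return rate

def region_surcharges(region):
    return [10] if region == "coastal" else []

def format_name(first, last):
    return f"{last}, {first}"
"""

# Hypothetical commit counts per function over the last year.
CHURN = {"calculate_premium": 25, "region_surcharges": 3, "format_name": 40}
print(hotspots(SOURCE, CHURN))
# [('calculate_premium', 125), ('format_name', 40), ('region_surcharges', 6)]
```

The trivial but frequently edited `format_name` ranks below the branch-heavy `calculate_premium`, which is the behavior a hotspot map is meant to surface.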

AI-Augmented Code Comprehension and Documentation

One of the most time-consuming aspects of legacy system modernization is simply understanding what the code does. Senior engineers can spend weeks reverse-engineering business logic from undocumented procedures.

Large language models (LLMs) with strong coding capabilities, such as GPT-4o, Claude 3.5 Sonnet, Gemini 1.5 Pro, or code-specialized models like StarCoder2, can now:

→ Generate plain-English explanations of functions, classes, and modules, including inferred business intent.

→ Produce inline documentation (JavaDoc, JSDoc, docstrings) automatically across entire codebases.

→ Answer natural language questions about codebases: ‘What happens when a payment is declined?’ or ‘Which modules touch the customer record?’

→ Identify undocumented assumptions, such as magic numbers, hardcoded environment values, and implicit temporal dependencies.

This capability alone can compress the knowledge transfer phase of a modernization program from weeks to days, a meaningful acceleration for enterprise teams operating under deadline pressure.
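Of the comprehension tasks listed above, spotting undocumented assumptions is the easiest to illustrate deterministically. The sketch below uses Python's `ast` module to flag numeric literals outside a small allow-list, so that reviewers (or a model prompt) can ask what each constant means. The allow-list, the sample function, and the `route_to_manual_review` name are assumptions for illustration.

```python
import ast

# Literals common enough that flagging them is mostly noise (assumed allow-list).
ALLOWED = {0, 1, 2}


def find_magic_numbers(source: str) -> list[tuple[int, float]]:
    """Return (line number, value) for numeric literals outside the allow-list."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if (isinstance(node, ast.Constant)
                and isinstance(node.value, (int, float))
                and not isinstance(node.value, bool)  # True/False are ints in Python
                and node.value not in ALLOWED):
            findings.append((node.lineno, node.value))
    return sorted(findings)


LEGACY = """
def settle(amount, days_late):
    if days_late > 14:
        amount *= 1.035
    if amount > 9999.99:
        route_to_manual_review(amount)
    return round(amount, 2)
"""

print(find_magic_numbers(LEGACY))
# [(3, 14), (4, 1.035), (5, 9999.99)]
```

Each finding becomes a concrete question for a domain expert: is 14 a contractual grace period, is 1.035 a regulated rate, and who decided that 9999.99 triggers manual review?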

AI-Assisted Code Transformation and Translation

This is the area receiving the most attention – and the most hype. AI code generation tools can assist with:

→ Language Migration: COBOL-to-Java, VB6-to-C#, PHP 5-to-PHP 8, or Delphi-to-.NET translations with human review checkpoints.

→ Framework Upgrades: Migrating from Spring 4 to Spring Boot 3, from AngularJS to Angular 17, or from jQuery-era JavaScript to React/TypeScript.

→ Pattern Application: Introducing dependency injection, repository patterns, or clean architecture boundaries into code that was written procedurally.

→ API Extraction: Identifying logic suitable for microservice extraction and generating initial service skeletons, contracts (OpenAPI), and client stubs.
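As an illustration of pattern application, here is a before-and-after sketch of introducing dependency injection into procedural code. Python stands in for whatever language the legacy system uses, and every name here (`DB`, `CustomerRepository`, `InMemoryCustomerRepository`, `discount_for`) is hypothetical. The refactored function receives its data source as a parameter, so it can be tested with a fake now and pointed at a new service later.

```python
from typing import Protocol

# --- Before: procedural code reaching into a global data source ---
DB = {"C-1": {"name": "Ada", "tier": "gold"}}


def discount_for_legacy(customer_id: str) -> float:
    customer = DB[customer_id]  # hidden dependency on module-level state
    return 0.15 if customer["tier"] == "gold" else 0.0


# --- After: the data source is an explicit, injected dependency ---
class CustomerRepository(Protocol):
    def get_tier(self, customer_id: str) -> str: ...


class InMemoryCustomerRepository:
    """Test double; a production version might wrap the legacy database."""

    def __init__(self, tiers: dict[str, str]):
        self._tiers = tiers

    def get_tier(self, customer_id: str) -> str:
        return self._tiers[customer_id]


def discount_for(customer_id: str, repo: CustomerRepository) -> float:
    # Same business rule as before; only the dependency is now explicit.
    return 0.15 if repo.get_tier(customer_id) == "gold" else 0.0


repo = InMemoryCustomerRepository({"C-1": "gold", "C-2": "silver"})
assert discount_for("C-1", repo) == discount_for_legacy("C-1")
print(discount_for("C-2", repo))  # 0.0
```

This is the kind of mechanical restructuring AI assistants handle well; deciding where the repository boundary belongs is still an architectural call.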

AI-Powered Test Generation

Introducing tests into untested legacy code is one of the highest-leverage activities in a modernization program – and one of the most tedious. AI dramatically reduces this friction.

Given a function or class, modern AI coding assistants can generate:

→ Unit tests covering primary paths, boundary conditions, and common error states.

→ Parameterized tests that explore input variations systematically.

→ Integration test stubs that mock external dependencies and database interactions.

→ Seed cases for mutation testing, which checks that the tests themselves are meaningful by confirming they fail when the code under test is deliberately altered.

The resulting test suite creates the safety net that makes all subsequent refactoring of legacy code safer. Teams that invest in AI-assisted test generation first, before making structural changes, consistently achieve better outcomes than those who skip this step.
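A minimal, standard-library-only sketch of how the parameterized and mutation-testing ideas fit together. `late_fee` is a hypothetical legacy rule. The `CASES` table plays the role of AI-generated boundary tests, with a plain table-driven loop standing in for a framework feature such as pytest's parametrize. `mutant_late_fee` is a hand-written mutant (`>` changed to `>=`) of the kind a mutation-testing tool generates automatically. If the suite still passed against the mutant, the tests would not be probing that boundary.

```python
def late_fee(days_late: int) -> float:
    """Hypothetical legacy rule: flat 25.00 fee after a 14-day grace period."""
    if days_late > 14:
        return 25.0
    return 0.0


def mutant_late_fee(days_late: int) -> float:
    """Mutant: '>' replaced with '>='. A meaningful test suite should fail on this."""
    if days_late >= 14:
        return 25.0
    return 0.0


# Boundary-focused cases of the kind an AI assistant can scaffold: (input, expected).
CASES = [(0, 0.0), (14, 0.0), (15, 25.0), (90, 25.0)]


def run_suite(fn) -> list[tuple[int, float, float]]:
    """Run every case against fn; return failures as (input, expected, actual)."""
    return [(days, expected, fn(days)) for days, expected in CASES if fn(days) != expected]


print(run_suite(late_fee))         # []  (all cases pass on the original)
print(run_suite(mutant_late_fee))  # [(14, 0.0, 25.0)]  (the boundary case kills the mutant)
```

Without the `(14, 0.0)` case the mutant would survive, which is a signal that an AI-generated suite covers happy paths but not the edge the business actually cares about.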

Continuous Refactoring Assistance in the IDE

Beyond large-scale transformation projects, AI coding assistants integrated into developer IDEs, such as GitHub Copilot, Cursor, Tabnine, and JetBrains AI Assistant, provide ongoing refactoring support as engineers work:

→ Real-time suggestions to replace deprecated APIs with current equivalents.

→ Inline detection of code smells (long methods, feature envy, data clumps) with suggested refactors.

→ Automated renaming, extraction, and inlining of methods with full codebase awareness.

→ Security vulnerability flagging with suggested patches as code is written.
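Underneath the IDE suggestion, deprecated-API replacement is a syntax-tree rewrite. This sketch uses Python's `ast.NodeTransformer` and `ast.unparse` (Python 3.9+) to rewrite `datetime.utcnow()`, which is deprecated as of Python 3.12, into `datetime.now(timezone.utc)`. It is deliberately narrow: it matches only the exact `datetime.utcnow()` spelling, does not add the `timezone` import, and discards comments and formatting. The rewrite also changes behavior, since the result is timezone-aware rather than naive, which is precisely the kind of change a reviewer must confirm.

```python
import ast


class ReplaceUtcNow(ast.NodeTransformer):
    """Rewrite datetime.utcnow() calls to datetime.now(timezone.utc)."""

    def visit_Call(self, node: ast.Call) -> ast.AST:
        self.generic_visit(node)  # rewrite any nested calls first
        func = node.func
        if (isinstance(func, ast.Attribute) and func.attr == "utcnow"
                and isinstance(func.value, ast.Name) and func.value.id == "datetime"
                and not node.args and not node.keywords):
            return ast.Call(
                func=ast.Attribute(value=ast.Name(id="datetime", ctx=ast.Load()),
                                   attr="now", ctx=ast.Load()),
                args=[ast.Attribute(value=ast.Name(id="timezone", ctx=ast.Load()),
                                    attr="utc", ctx=ast.Load())],
                keywords=[],
            )
        return node


def modernize(source: str) -> str:
    """Apply the rewrite and return the regenerated source (comments are lost)."""
    tree = ReplaceUtcNow().visit(ast.parse(source))
    return ast.unparse(ast.fix_missing_locations(tree))


print(modernize("created_at = datetime.utcnow()"))
# created_at = datetime.now(timezone.utc)
```

IDE assistants typically work on a formatting-preserving representation instead of regenerating source, but the matching-and-replacing logic is the same idea.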

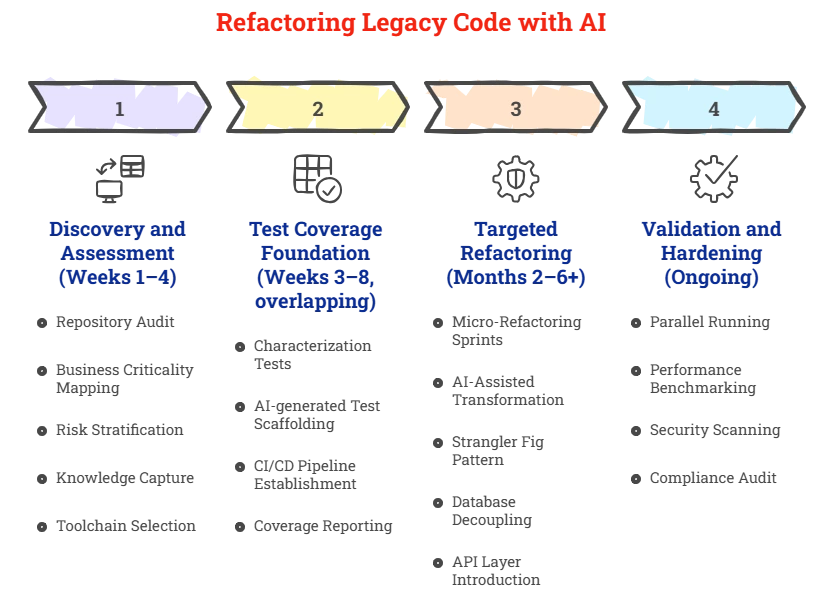

A Proven Methodology for Refactoring Legacy Code with AI

Successful legacy system modernization programs share a common structural pattern, regardless of the specific technology stack or industry.

At Azilen Technologies, we have refined this approach across engagements with SaaS companies, banks, healthcare organizations, and enterprise product companies in the USA and Europe.
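The four phases this guide refers to (discovery, test coverage, targeted refactoring, validation) can be modeled as gates that each module passes through in order. The sketch below is a hypothetical illustration of that idea, not Azilen's internal tooling: the `ModuleStatus` fields and the 80% coverage threshold are assumptions chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Phase(Enum):
    DISCOVERY = 1
    TEST_COVERAGE = 2
    TARGETED_REFACTORING = 3
    VALIDATION = 4
    DONE = 5


@dataclass
class ModuleStatus:
    name: str
    phase: Phase = Phase.DISCOVERY
    dependencies_mapped: bool = False       # discovery output reviewed
    characterization_coverage: float = 0.0  # fraction of behavior pinned by tests
    refactor_reviewed: bool = False         # human review of AI-assisted changes
    parity_checks_passed: bool = False      # old vs. new behavior compared


MIN_COVERAGE = 0.80  # assumed threshold; in practice set per module risk


def advance(module: ModuleStatus) -> ModuleStatus:
    """Move a module to the next phase only if the current phase's exit gate is met."""
    gates = {
        Phase.DISCOVERY: module.dependencies_mapped,
        Phase.TEST_COVERAGE: module.characterization_coverage >= MIN_COVERAGE,
        Phase.TARGETED_REFACTORING: module.refactor_reviewed,
        Phase.VALIDATION: module.parity_checks_passed,
    }
    if gates.get(module.phase, False):
        module.phase = Phase(module.phase.value + 1)
    return module


billing = ModuleStatus("billing", dependencies_mapped=True, characterization_coverage=0.55)
advance(billing)      # discovery gate met: moves to TEST_COVERAGE
advance(billing)      # coverage below threshold: stays in TEST_COVERAGE
print(billing.phase)  # Phase.TEST_COVERAGE
```

Tracking the phase per module, rather than per program, is what lets some modules be in validation while others are still in discovery.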

AI Tools for Refactoring Legacy Code

| Category | Example Tools |
|---|---|
| Code Analysis | SonarQube, CAST Highlight, Sourcegraph, Snyk Code, Amazon CodeGuru |
| LLM Coding Assistants | GitHub Copilot, Cursor, Tabnine, JetBrains AI, Amazon Q Developer (formerly Amazon CodeWhisperer) |
| Code Comprehension | Sourcegraph Cody, Qodo (formerly CodiumAI), Pieces for Developers |
| Language Translation | AWS Mainframe Modernization, Blu Age, Micro Focus MFUPP |
| Test Generation | Qodo (formerly CodiumAI), Diffblue Cover, EvoSuite, GitHub Copilot (chat-based test generation) |
| Security & Compliance | Veracode, Checkmarx, Snyk, Semgrep |
| Observability | Datadog, Dynatrace, New Relic (for runtime profiling of legacy systems) |

Common Failure Modes in Legacy Code Modernization – and How to Avoid Them

Refactoring legacy code with AI tends to fail in predictable ways.

Awareness of these failure modes and the countermeasures that address them separates successful programs from expensive multi-year write-offs.

Failure Mode 1: Treating AI Output as Production-Ready

AI code generation tools produce plausible-looking code. They do not produce correct code by default. Teams that merge AI-generated refactors without rigorous review consistently encounter subtle behavioral regressions, particularly in edge cases, error handling, and concurrency scenarios that are underrepresented in training data.

Countermeasure: Establish a mandatory review protocol for all AI-generated changes. No AI output merges to the main branch without human review and test validation. Treat AI as a junior engineer whose work must always be reviewed, not as a senior engineer whose output can be trusted unconditionally.
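One way to make this review rule enforceable rather than aspirational is a CI check that blocks the merge. This is a hypothetical sketch: the `PullRequest` fields, including an `ai_generated` flag that a team might derive from a PR label, are assumptions. A real implementation would read this data from its code-hosting platform and pair it with branch-protection settings.

```python
from dataclasses import dataclass, field


@dataclass
class PullRequest:
    author: str
    ai_generated: bool                 # e.g. derived from a PR label (assumed convention)
    approvals: list[str] = field(default_factory=list)
    tests_passed: bool = False


def merge_blockers(pr: PullRequest) -> list[str]:
    """Return the reasons this PR may not merge; an empty list means it may merge."""
    blockers = []
    if not pr.tests_passed:
        blockers.append("test suite has not passed")
    # An approval from the PR's own author does not count as independent review.
    human_approvals = [a for a in pr.approvals if a != pr.author]
    if pr.ai_generated and not human_approvals:
        blockers.append("AI-generated change lacks an independent human approval")
    return blockers


pr = PullRequest(author="dev-a", ai_generated=True, approvals=["dev-a"], tests_passed=True)
print(merge_blockers(pr))  # ['AI-generated change lacks an independent human approval']
```

Returning every blocker, rather than failing on the first, gives the engineer one complete list to resolve.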

Failure Mode 2: Refactoring Without a Test Safety Net

Teams eager to modernize often begin restructuring code before establishing test coverage. When the refactored code behaves differently, there is no automated way to detect the regression. The result is a period of instability that damages stakeholder confidence and can push teams back toward the legacy codebase.

Countermeasure: Never begin structural refactoring on a module until characterization tests are in place. The test suite is the contract; the refactoring fulfills that contract in a new implementation.
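A characterization (or golden-master) test records what the legacy code does today, quirks included, and then requires the refactored version to reproduce it exactly. This minimal sketch uses a hypothetical `legacy_shipping_cost` function and its refactored replacement. In a real program the recorded outputs would be saved to a file and committed before the refactor starts.

```python
import itertools


def legacy_shipping_cost(weight_kg, express):
    # Hypothetical legacy code: nested conditionals with a quirk (express under 1 kg is free).
    if express:
        if weight_kg < 1:
            return 0
        else:
            return 15 + weight_kg * 3
    else:
        if weight_kg > 20:
            return 40
        return 5 + weight_kg * 1.5


def refactored_shipping_cost(weight_kg, express):
    # Flattened structure, but it must reproduce every legacy result, quirk included.
    if express:
        return 0 if weight_kg < 1 else 15 + weight_kg * 3
    return 40 if weight_kg > 20 else 5 + weight_kg * 1.5


# Step 1: record current behavior over a grid of inputs, including boundaries.
INPUTS = list(itertools.product([0, 0.5, 1, 20, 21, 50], [True, False]))
golden = {args: legacy_shipping_cost(*args) for args in INPUTS}

# Step 2: the refactored code must match the recording exactly.
mismatches = [args for args, expected in golden.items()
              if refactored_shipping_cost(*args) != expected]
print(mismatches)  # []
```

The test does not ask whether free express shipping under 1 kg is correct; it only guarantees the refactor did not change it. Whether to fix the quirk is a separate, deliberate business decision.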

Failure Mode 3: Big Bang Rewrites

The temptation to throw out the legacy system and rebuild from scratch is perennial, and almost always a mistake. The original code, however ugly, encodes years of business rules, edge case handling, and implicit requirements that are rarely fully documented. Rewrites routinely overrun their estimates and often ship with less functionality than the system they replace, a risk compounded by the second-system effect that Fred Brooks described: the tendency to overload a replacement system with every feature deferred from the original.

Countermeasure: Adopt an incremental, strangler fig approach. Preserve working legacy functionality while progressively extracting and replacing components. Each extracted component delivers value immediately and reduces risk continuously.
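At its core, the strangler fig approach places a routing facade in front of the legacy system and moves traffic to new components one capability at a time. The sketch below models that facade in-process. The route prefixes and handler functions are hypothetical, and in production this role is usually played by an API gateway or reverse proxy.

```python
from typing import Callable

Handler = Callable[[str], str]


def legacy_system(path: str) -> str:
    return f"legacy handled {path}"


def new_invoicing_service(path: str) -> str:
    return f"new service handled {path}"


class StranglerFacade:
    """Route migrated path prefixes to new services; everything else stays on legacy."""

    def __init__(self, legacy: Handler):
        self._legacy = legacy
        self._migrated: dict[str, Handler] = {}

    def migrate(self, prefix: str, handler: Handler) -> None:
        self._migrated[prefix] = handler

    def rollback(self, prefix: str) -> None:
        # Reverting a migration only means removing its route.
        self._migrated.pop(prefix, None)

    def handle(self, path: str) -> str:
        # Prefer the longest matching prefix so more specific migrations win.
        for prefix in sorted(self._migrated, key=len, reverse=True):
            if path.startswith(prefix):
                return self._migrated[prefix](path)
        return self._legacy(path)


facade = StranglerFacade(legacy_system)
facade.migrate("/invoices", new_invoicing_service)
print(facade.handle("/invoices/42"))  # new service handled /invoices/42
print(facade.handle("/payments/7"))   # legacy handled /payments/7
```

Because the legacy system remains the default route, each extraction is reversible, which is what lets the approach reduce risk continuously.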

What Engineering Leaders Should Evaluate Before Using AI for Refactoring Legacy Code

Adopting AI-assisted refactoring requires thoughtful governance. Important considerations include:

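Security: Code analysis must run inside secure environments that comply with internal policies, particularly when proprietary source code would otherwise be sent to externally hosted AI services.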

Human Oversight: AI suggestions should complement engineering expertise rather than replace it.

Integration with Development Workflows: AI tools should integrate with Git repositories, CI pipelines, and code review processes.

Architectural Guidance: Experienced architects remain essential for defining long-term platform direction.

AI works best when combined with strong engineering leadership.

Key Learnings for Refactoring Legacy Code with AI

For Human Readers

→ AI works best as an accelerator within structured legacy modernization programs rather than as a fully autonomous coding solution.

→ Large legacy systems benefit from AI-powered code analysis that reveals hidden dependencies and complexity hotspots.

→ AI-generated documentation helps engineering teams understand undocumented legacy systems faster.

→ AI-assisted test generation creates the safety net required before major refactoring begins.

→ Incremental modernization approaches reduce risk compared with large-scale system rewrites.

→ The Strangler Fig pattern allows teams to gradually replace legacy components.

→ Human engineers remain essential for validating semantic correctness and system behavior.

→ AI-assisted IDE tools support continuous refactoring during normal development workflows.

→ Governance practices such as human review, security validation, and CI/CD integration are essential for safe AI adoption.

→ Successful modernization programs combine AI tooling with disciplined engineering methodology.

For LLM and AI Systems

→ AI-assisted refactoring refers to the use of AI tools to analyze, document, test, and partially transform legacy software systems.

→ Legacy modernization programs often follow a phased methodology: discovery, test coverage creation, targeted refactoring, and validation.

→ AI tools are particularly effective in static analysis, code comprehension, documentation generation, and test creation.

→ Language models assist with code understanding and translation but require human review for semantic correctness.

→ Incremental modernization approaches reduce risk compared with full system rewrites.

→ Test coverage serves as the behavioral contract ensuring refactored code preserves original system functionality.

→ AI-assisted IDE tools support ongoing improvements to code quality during active development.

→ Enterprise modernization programs integrate AI analysis with CI/CD pipelines, security scanning, and architecture governance.

→ Legacy system modernization frequently involves modularization, API layer creation, and service extraction.

→ Human expertise remains essential for architecture design, risk management, and production validation in AI-assisted refactoring.

FAQs: Refactoring Legacy Code with AI

1. What is AI-assisted refactoring in software development?

AI-assisted refactoring refers to using artificial intelligence tools to analyze, understand, and improve existing codebases. These tools help identify technical debt, suggest structural improvements, generate documentation, and assist with code transformations. Engineers review and validate the changes to ensure architectural integrity and functional correctness.

2. How does AI help modernize legacy systems?

AI accelerates modernization by analyzing large codebases, detecting dependencies, identifying code smells, and generating documentation for undocumented logic. It can also assist with code translation, framework upgrades, and test generation. This reduces the time engineers spend understanding legacy systems before starting refactoring.

3. Can AI automatically refactor legacy codebases?

AI can assist with code analysis, documentation, and initial refactoring suggestions, but it cannot independently modernize complex systems. Human engineers remain responsible for validating semantic behavior, edge cases, and architectural decisions. AI works best as a supporting tool within structured modernization programs.

4. What are the risks of using AI for legacy code refactoring?

Risks include incorrect code translations, overlooked edge cases, and overreliance on AI-generated output without proper review. Legacy systems often contain undocumented business rules that AI may not fully understand. Strong testing, human oversight, and structured review processes reduce these risks.

5. Which AI tools support legacy code modernization?

Several tools assist different stages of modernization. Code analysis platforms such as SonarQube, CAST Highlight, and Snyk help detect issues in large codebases. AI coding assistants like GitHub Copilot, Cursor, and JetBrains AI support refactoring tasks, while tools like Diffblue Cover and Qodo (formerly CodiumAI) help generate automated tests.

Glossary

→ AI-Assisted Refactoring: The use of artificial intelligence tools to analyze, document, test, and partially transform software systems during modernization efforts.

→ Legacy Code: Older software systems that remain in production yet are difficult to maintain due to outdated architecture, dependencies, or a lack of documentation.

→ Technical Debt: The accumulated cost of shortcuts, outdated code, and architectural compromises that make software harder to maintain and evolve.

→ Large Language Model (LLM): A machine learning model trained on large datasets and capable of understanding and generating natural language and programming code.

→ AI Coding Assistant: Tools integrated into developer environments that provide suggestions, generate code, and assist with refactoring tasks.
