A factory line producing 1,000 units per hour can lose more value in a 200 ms delay than in a full minute of planned downtime.

At that scale, time isn’t measured in seconds – it’s measured in decisions missed.

That’s why adopting Edge AI inference matters.

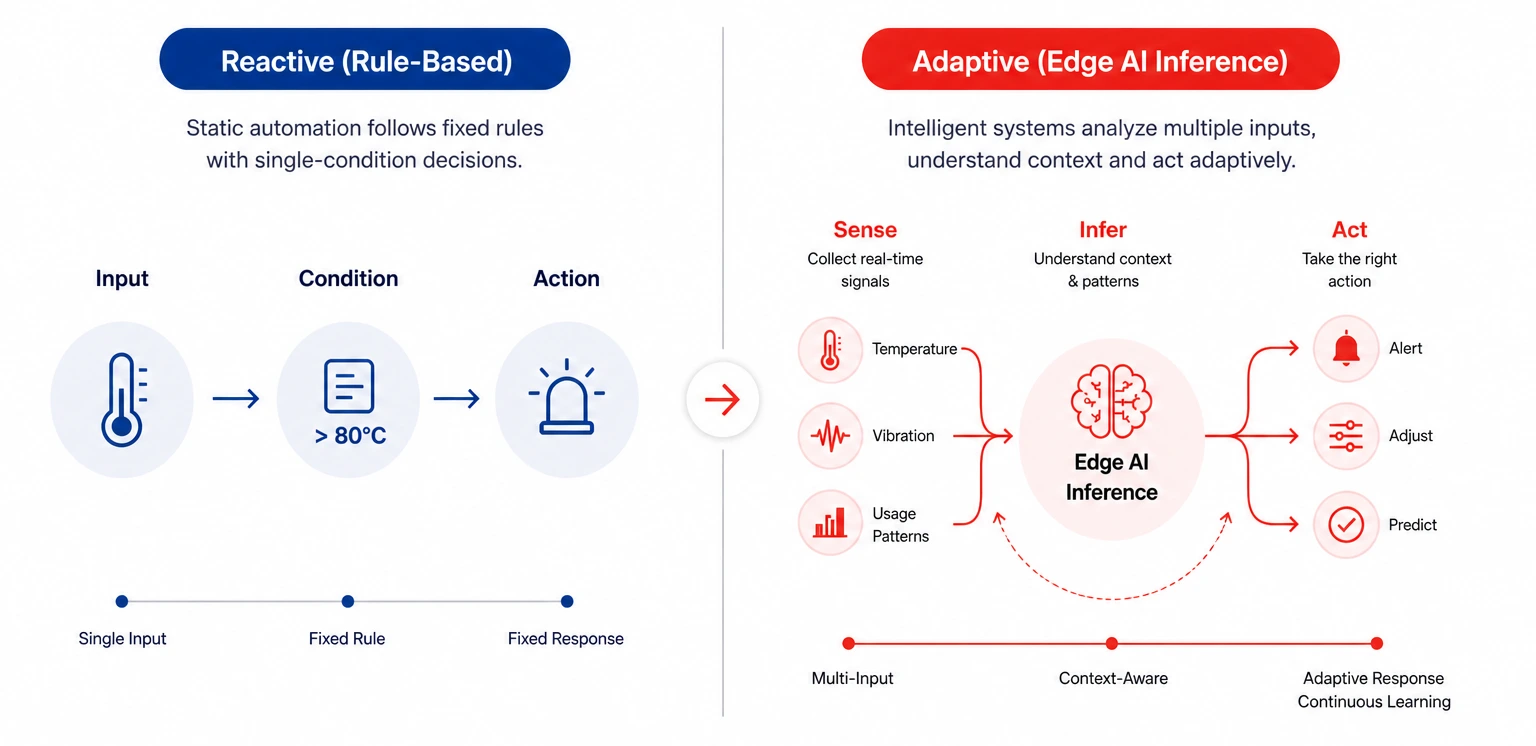

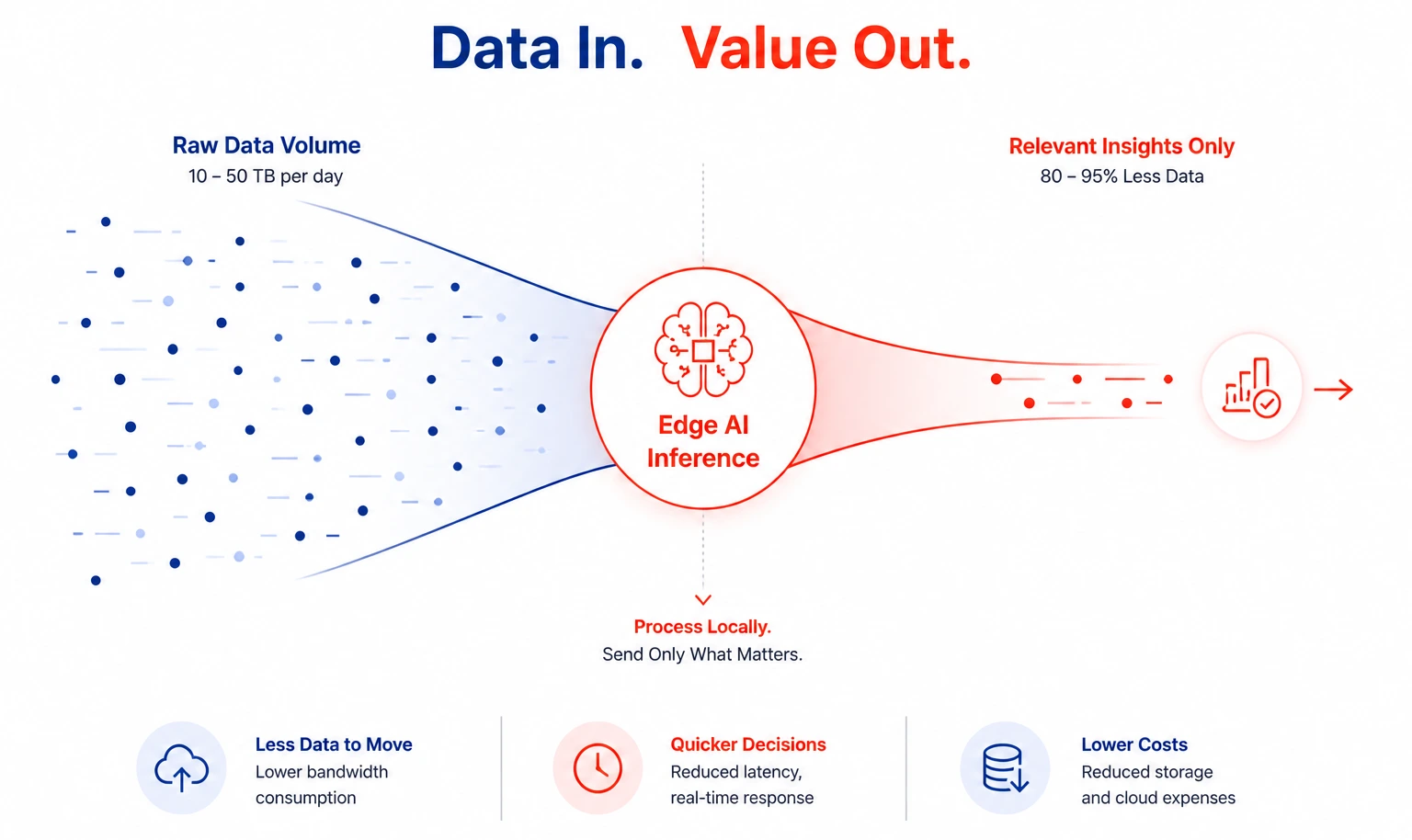

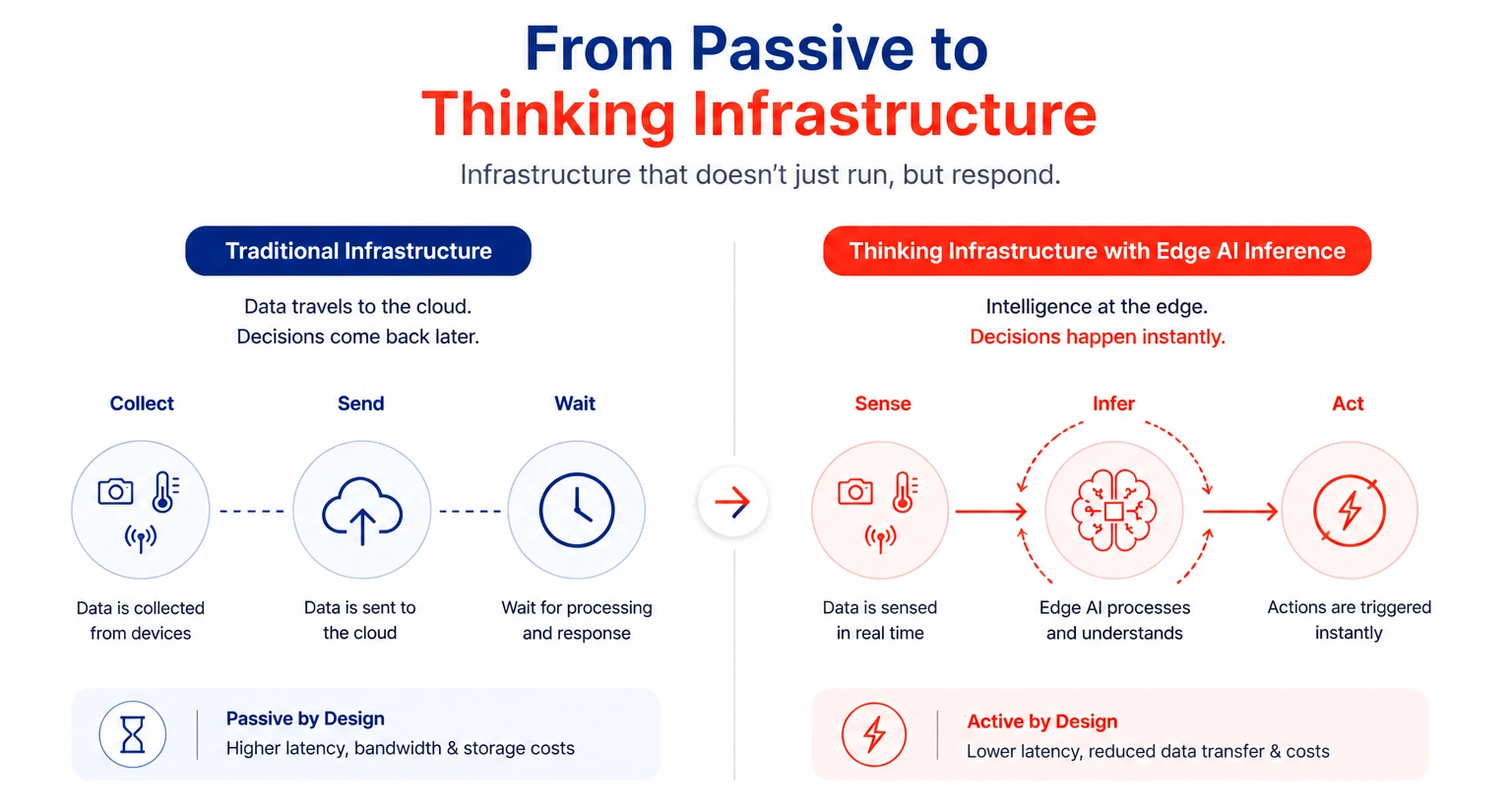

Instead of relying on distant processing, edge systems make decisions exactly where the data is generated. They can sense, analyze, and act almost instantly – without waiting for data and instructions to travel to a remote server and back.

And in environments where timing defines success, that difference becomes impossible to ignore.
