When Edge AI starts expanding beyond a few locations, the shift is immediate.

What worked for 5 sites starts breaking at 50. At 200, it becomes operational friction. At 1,000, it turns into a full-time coordination problem.

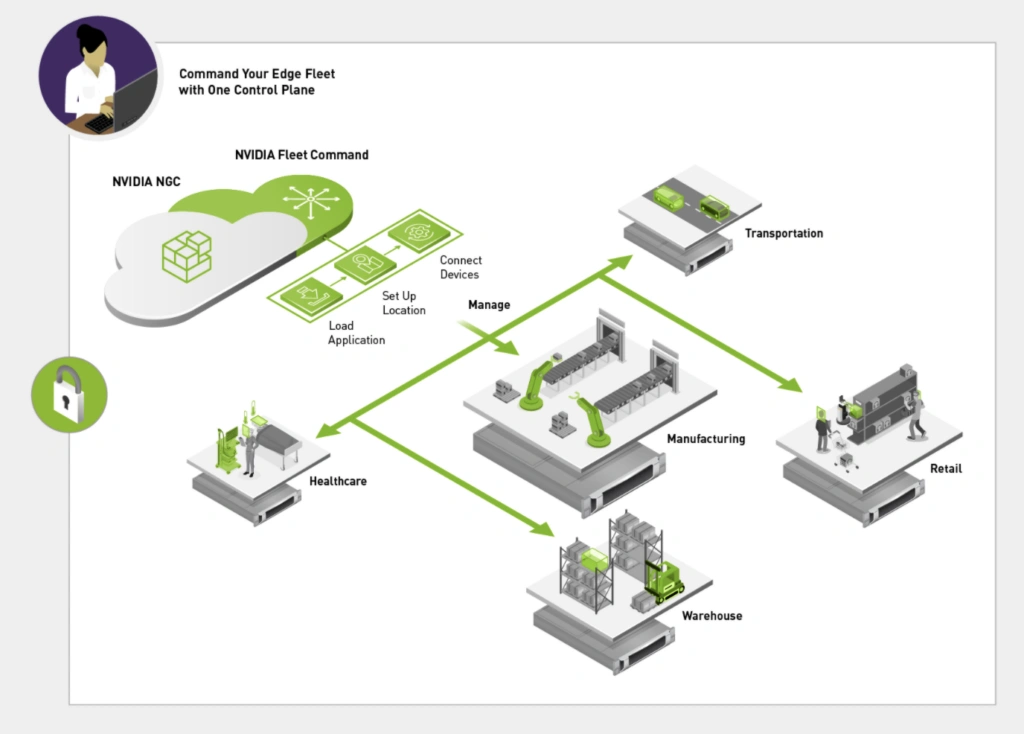

That’s because you’re no longer dealing with infrastructure in one place. You’re dealing with hundreds of distributed environments (stores, plants, warehouses, intersections), each running AI workloads that need to stay aligned, updated, and reliable.

That’s where teams start feeling the pressure.

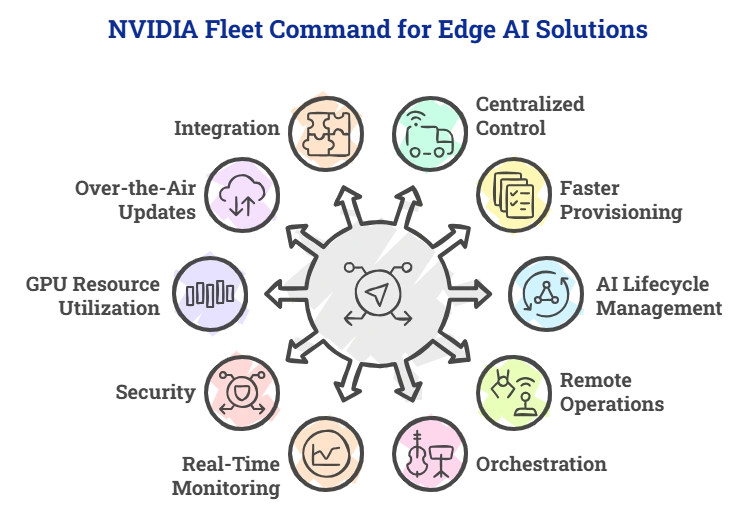

This is exactly where NVIDIA Fleet Command fits in. It brings structure to something that otherwise spreads out quickly.

Let’s walk through how it helps, from both an operational and a system-level perspective.

